Lee Calcote is an innovator and a product and technology leader, active in the community as a Docker Captain, Cloud Native Ambassador, and GSoC, GSoD, and Community Bridge mentor. In this talk, he walked through the service mesh specifications and why they matter in your deployment. How many service mesh specifications do you know? No worries if you're unfamiliar with them: he went through each one.

Service Mesh Specifications:

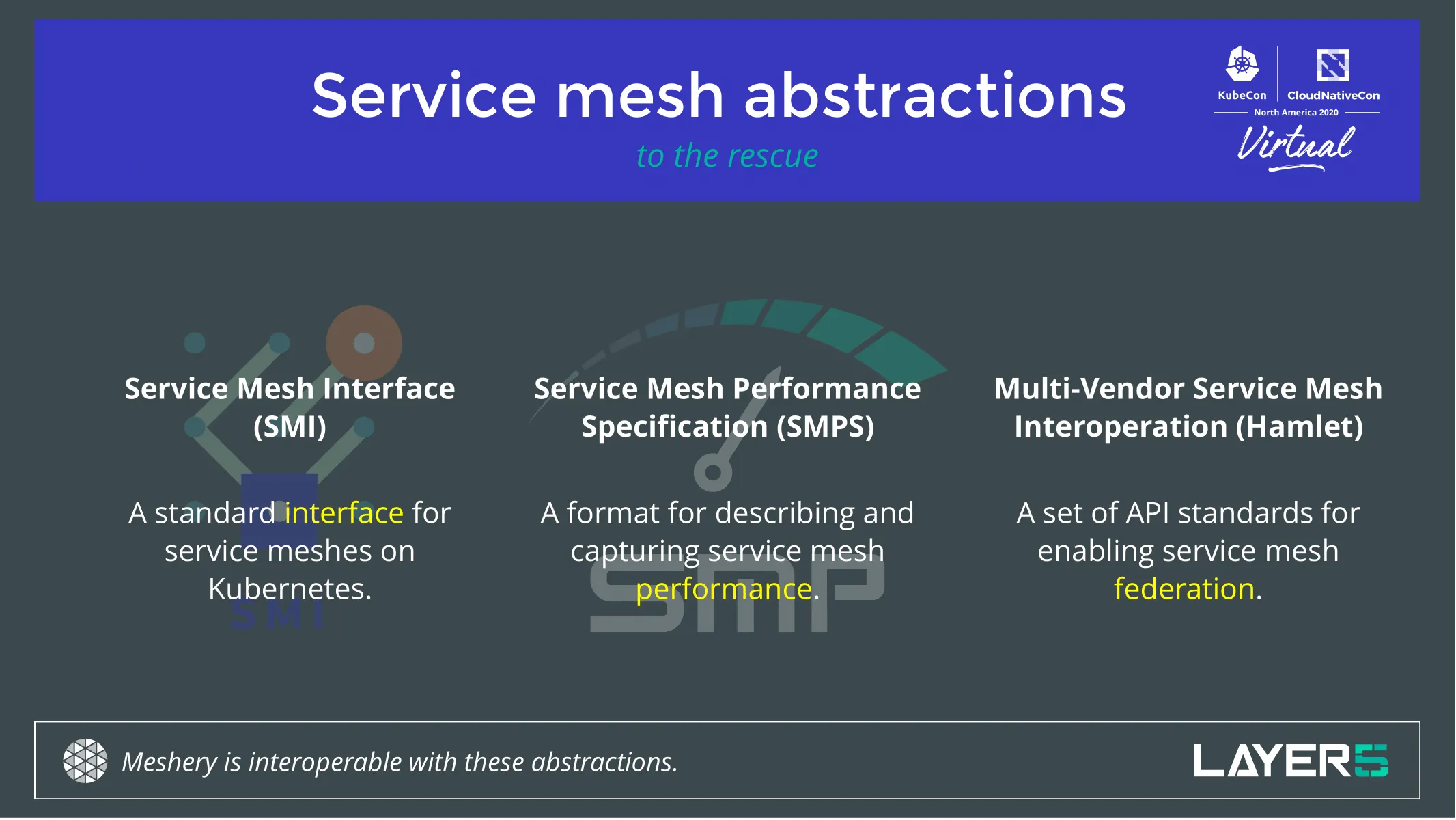

As service meshes become commonplace in cloud native and edge environments, the need for vendor- and technology-agnostic interfaces to interact with them grows too. The Service Mesh Interface (SMI), Service Mesh Performance (SMP), and Multi-Vendor Service Mesh Interoperation (Hamlet) are three open specifications that address interoperability, workload management, and performance management across service meshes.

Learn what makes each of them unique and why they are much needed. See each of these three specifications in action as we use Meshery, the open-source service mesh management plane, to demonstrate the value and functionality of each service mesh abstraction and the adherence to these specifications by Istio, Linkerd, Consul, and other popular service meshes.

Cloud Native Journey to Service Meshes:

The advent of cloud native came with the popularization of containers. Thank you, Docker! From there, containers took off like wildfire. It turns out you need an orchestrator to wrangle that sprawl. We saw a number of orchestrators arrive, and several of them are still around.

Service meshes have become a hot topic in the last few years. They continue to be, rightfully so, a very powerful piece of technology. “A lot of the power is yet to come from my perspective. For my part, I believe that there is a tomorrow in which data plane intelligence really matters. And matters about how people write cloud native applications,” Lee emphasized. Not everyone quite understands the capabilities of meshes as they are promoted and spoken about today. So come along on the journey of service mesh.

There are a number of service meshes out there. One of the community projects tracks the landscape of all the meshes there are. There’s a lot to say about each of them, their architecture, and how they work. Why were they made? Who are they focused on? What do they do? When did they come about? Why are some of them not here anymore? Why are we still seeing new ones? There is a lot to go through, and you might be interested in any number of the details that the landscape tracks.

Service Mesh Interface

- Its goal and genesis lie inside of Kubernetes.

- Being a specification that is native to Kubernetes, its focus is on lowest common denominator functionality.

- It focuses on bringing forth APIs that highlight and reinforce the most common use cases that service meshes are currently used for.

- It leaves room for additional APIs that address other service mesh functionality as more people adopt meshes and make their use cases well known.

- There are seven service meshes that claim compatibility with SMI. There has been a community, open-source effort to create service mesh conformance tests that assert whether or not a given service mesh is compatible with SMI.

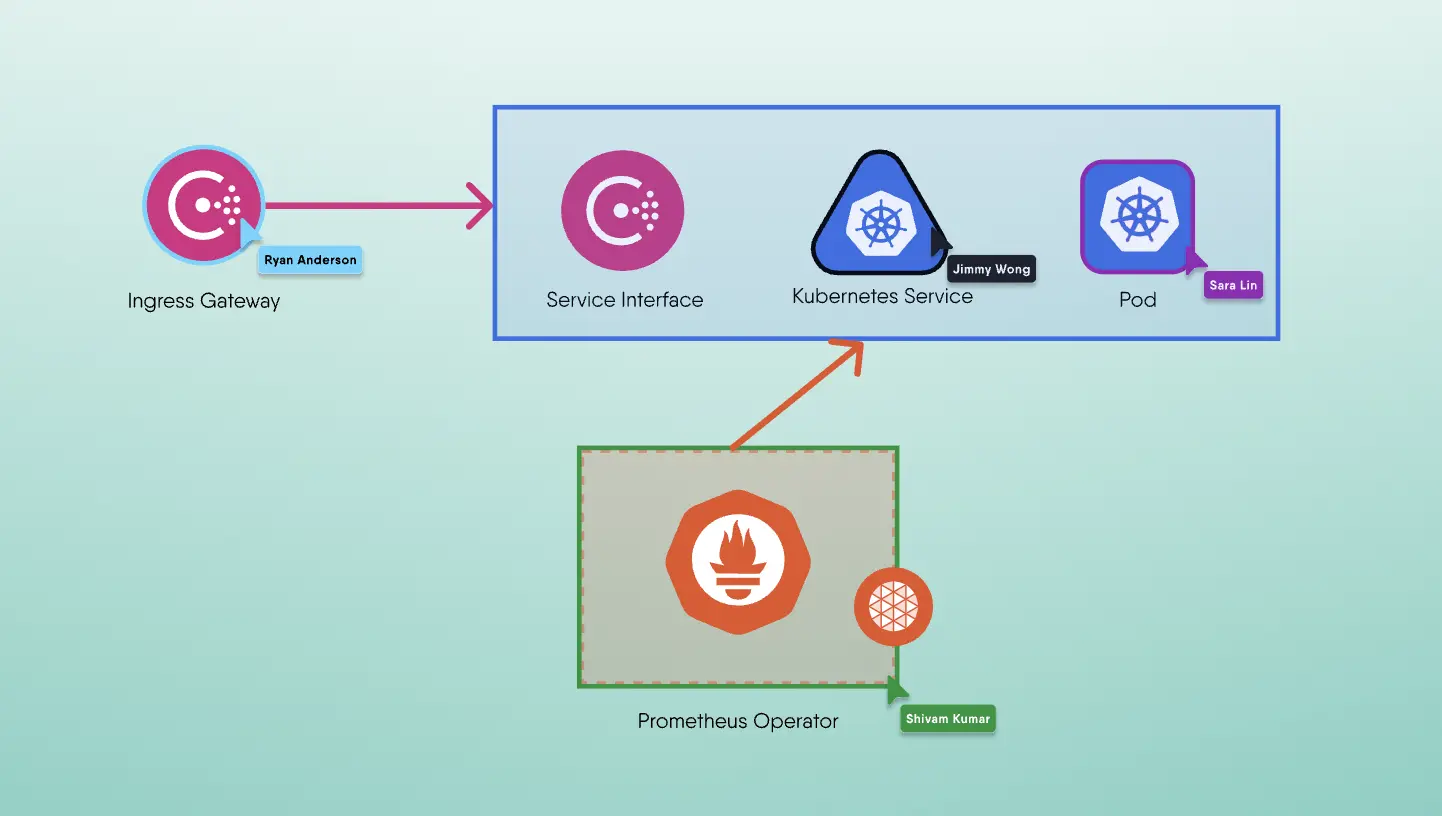

- To facilitate those types of tests, you need a tool that provisions a sample application on those service meshes, generates load, and tests whether a behavior such as traffic splitting works as expected with that service mesh implementation.

- Then you need to be able to collect the results, guarantee the provenance of those results and publish them.

- As a community, we turned to Meshery as the tool to implement SMI conformance and we have been working with the individual service meshes to validate their conformance.

- We work on an open-source project called Meshery.

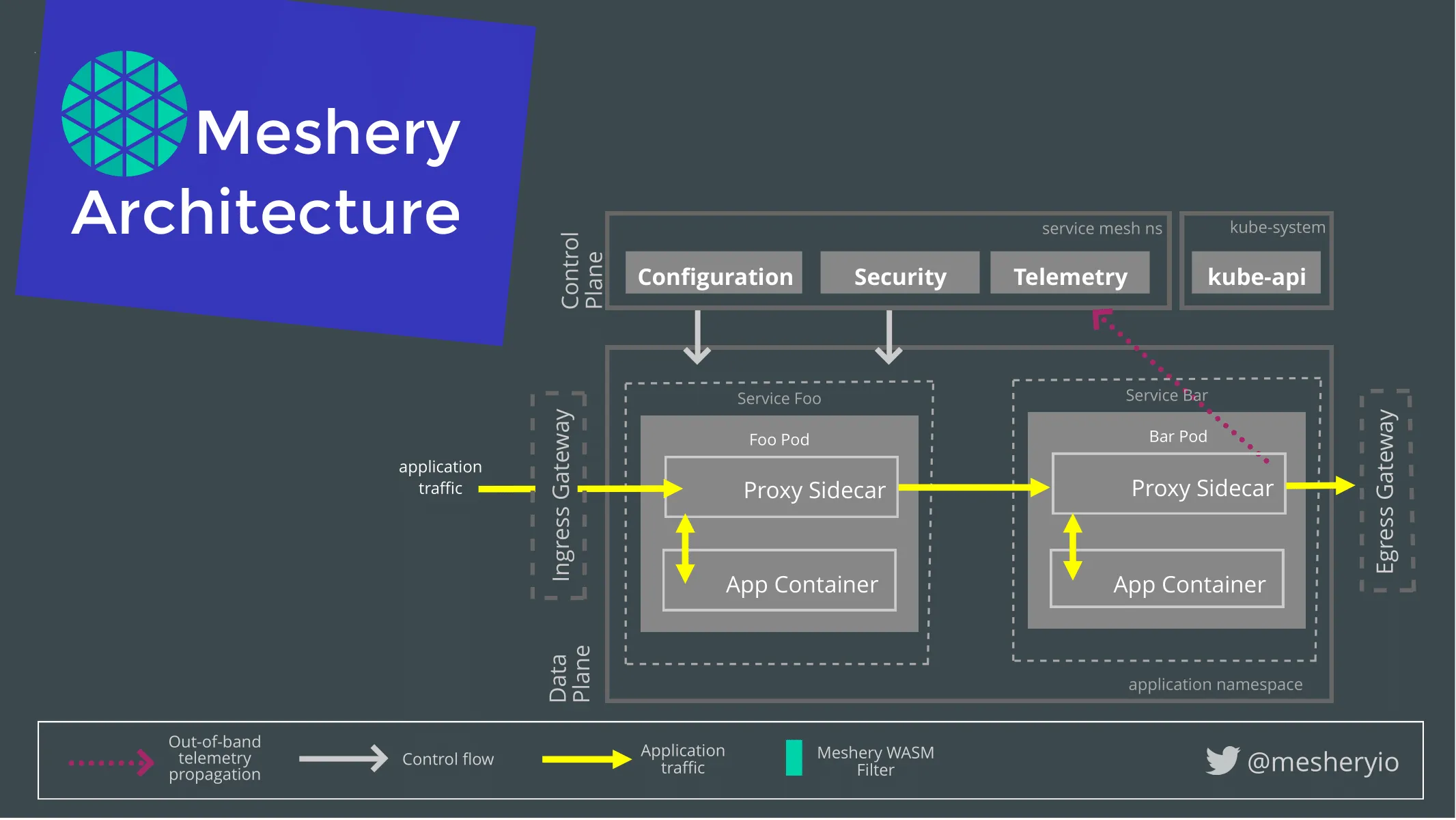

- Meshery, the cloud native management plane, is the canonical implementation of the Service Mesh Performance specification.

- Management planes can do a number of things to help bridge the divide between other back-end systems and service meshes. They also help with performance management and configuration management, and with making sure you follow best practices in your implementations by taking common patterns and applying them to your environment.

- We need to spin up Meshery locally

- We use mesheryctl as the command line interface to work with Meshery.

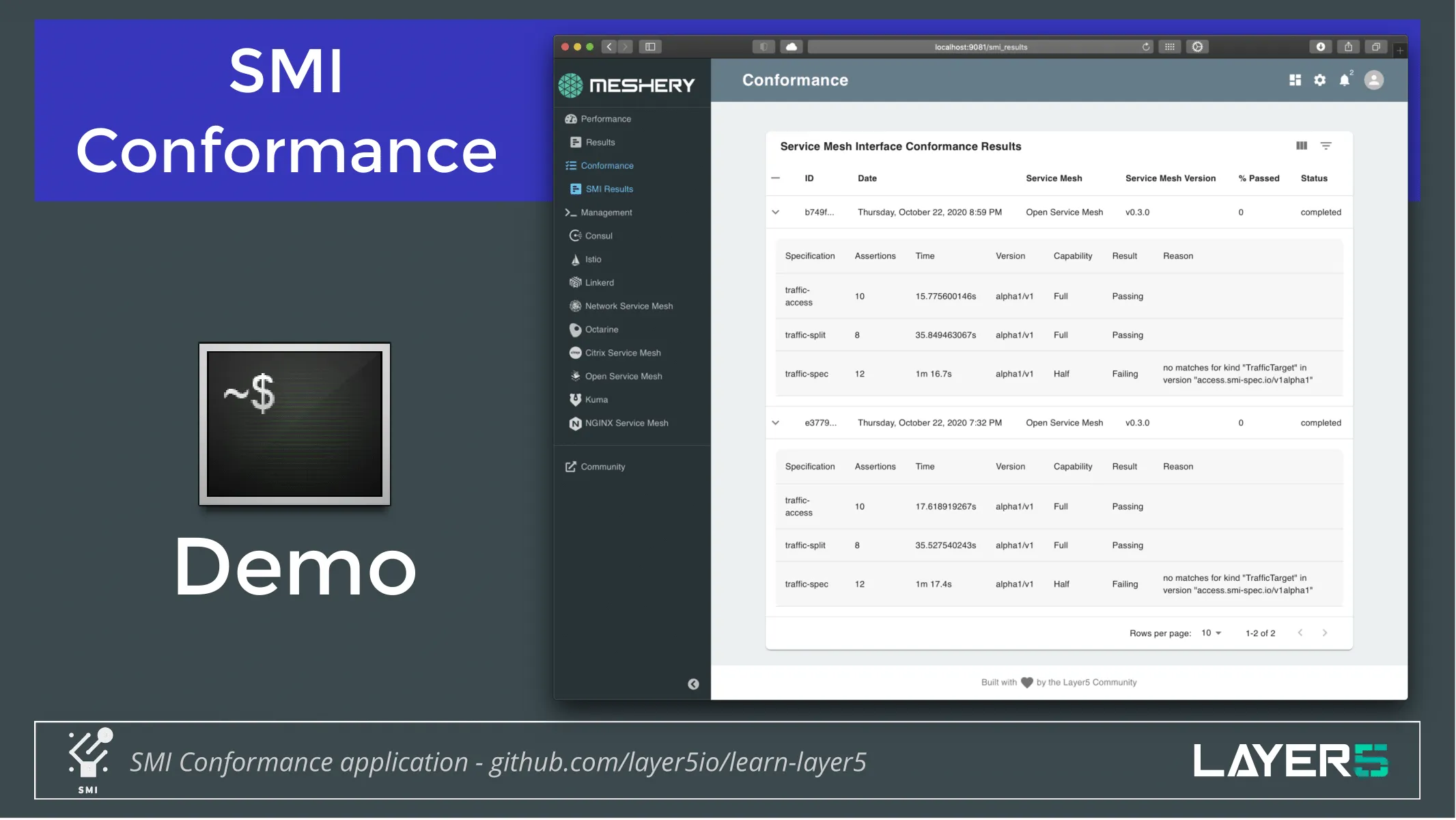

- We can interact with a number of different service meshes. The service mesh we’re going to work with today is Open Service Mesh (one of the seven that claim compatibility with SMI). Let’s put it to the test.

- We'll initiate SMI conformance

- These tests run assertions across the different SMI specifications. We’re looking at traffic access, traffic splitting, and traffic specification. Meshery then collects these results and will eventually publish them in combination with the SMI project.
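The traffic-splitting check described above can be sketched in a few lines. This is a minimal illustration, not Meshery's actual conformance test: the function names, the backend names `v1` and `v2`, and the 5% tolerance are all assumptions made for the example, and the load is simulated rather than sent through a real mesh.

```python
import random
from collections import Counter


def split_within_tolerance(weights, observed, tolerance=0.05):
    """Check observed request counts against configured split weights.

    `weights` maps backend name -> configured weight.
    `observed` maps backend name -> number of requests it received.
    The tolerance is a hypothetical threshold chosen for this sketch.
    """
    total_weight = sum(weights.values())
    total_requests = sum(observed.values())
    if total_weight == 0 or total_requests == 0:
        return False
    for backend, weight in weights.items():
        expected_share = weight / total_weight
        actual_share = observed.get(backend, 0) / total_requests
        if abs(expected_share - actual_share) > tolerance:
            return False
    # Traffic reaching a backend outside the split also counts as a failure.
    return set(observed) <= set(weights)


def simulate_requests(weights, n, seed=0):
    """Stand-in for a load generator: pick a backend per request by weight."""
    rng = random.Random(seed)
    backends = list(weights)
    picks = rng.choices(backends, weights=[weights[b] for b in backends], k=n)
    return Counter(picks)


weights = {"v1": 90, "v2": 10}
observed = simulate_requests(weights, 10_000)
print(split_within_tolerance(weights, observed))
```

In a real conformance run, the observed counts would come from the sample application behind the mesh rather than from `simulate_requests`.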

Service Mesh Performance

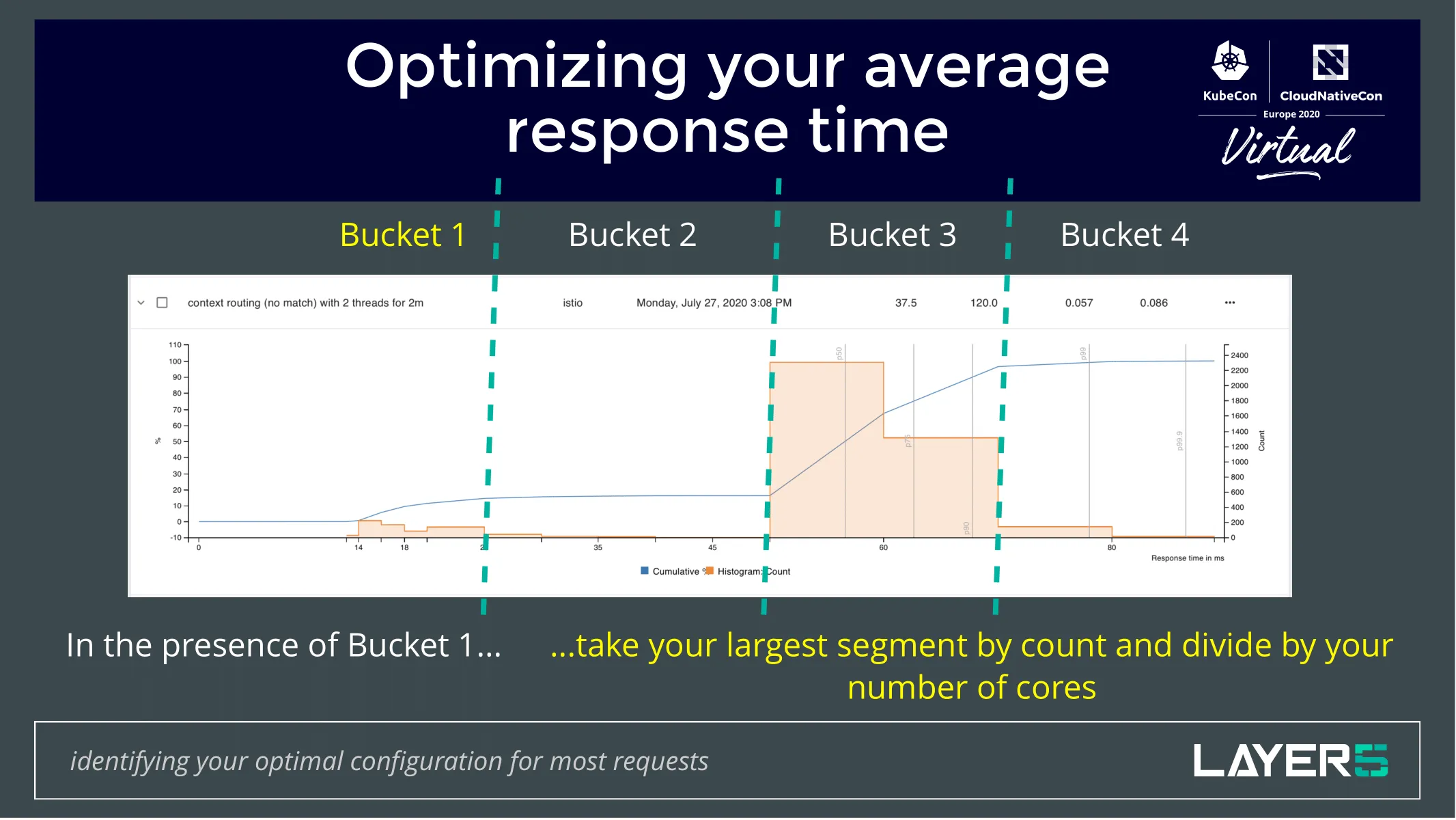

- Focused on describing and capturing the performance of a service mesh.

- Another way of looking at it is characterizing the overhead of a mesh against the value it provides.

- Trying to characterize the performance of the infrastructure of a service mesh can be really difficult.

- Consider the number of variables you would have to track, and how difficult it can be to run repeatable tests, benchmark your environment, track your history based on your environment, and compare performance against other meshes.

- SMP creates a standard way of capturing the performance of the mesh to help with these issues.

- It also covers the way in which you're configuring the control plane of your service mesh.

You might be using a client library to do some service mesh functionality. Maybe you're using those in combination with the service mesh. What costs more? What's more efficient? What's more powerful? Maybe you're using WebAssembly filters there. These are all open questions that SMP helps answer in your environment. You’d be surprised by the results of some of the tests that we and the community have done in combination with a couple of universities and graduate students.
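One way to make the "what costs more?" question concrete is to compare latency percentiles for the same workload with and without the mesh in the request path. The sketch below is an illustration only: the function names, the choice of percentiles, and the sample data are assumptions, and it is not how SMP or Meshery computes results.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a list of latency samples."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def mesh_overhead(baseline_ms, meshed_ms, pcts=(50, 90, 99)):
    """Compare latency percentiles measured without and with the mesh."""
    report = {}
    for pct in pcts:
        base = percentile(baseline_ms, pct)
        mesh = percentile(meshed_ms, pct)
        report[f"p{pct}"] = {
            "baseline_ms": base,
            "meshed_ms": mesh,
            "overhead_ms": mesh - base,
        }
    return report


# Made-up samples: the meshed run adds a flat 2 ms to every request.
baseline = [float(i) for i in range(1, 101)]
meshed = [x + 2.0 for x in baseline]
for name, row in mesh_overhead(baseline, meshed).items():
    print(name, row)
```

The same comparison works for a client library versus a mesh, or for two different meshes, as long as both runs use the same workload and environment.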

Performance Test: Demonstration of the Service Mesh Performance Implementation:

- On the terminal, we have a local deployment of Meshery running. You can also deploy Meshery on Kubernetes, including managed Kubernetes platforms like AKS, EKS, and GKE, or run it as a Docker container. Your Kubernetes cluster can also run on Docker Desktop.

- We have Open Service Mesh deployed.

- The Meshery UI is exposed on port 9081. This is the UI used to start a load test.

- Over here you can see we have three load generators: fortio, wrk2, and nighthawk.

- Each of these load generators records its own set of attributes, and each attribute has its own significance. We begin with fortio.

- You can download the test results, or you can browse to the Results tab and see all of the tests you have run so far.

- Next, we used nighthawk to generate load and benchmark the same service. Nighthawk is a relatively new load generator maintained by the Envoy community. It hasn't reached its 1.0 release yet, but it already has enough features to compete with the other load generators in use. It can generate a gRPC service on its own, and it has additional attributes that you can expose using its CLI tools.

- You can also see that Meshery can search your environment and show which specifications are being used and what the load on your Kubernetes cluster is.

- Jump into the Results tab and see how these results compare.

- Click on download, and a YAML file gets downloaded. Browse through it and you will see that the start time, load time, performance latencies, and metrics are captured.

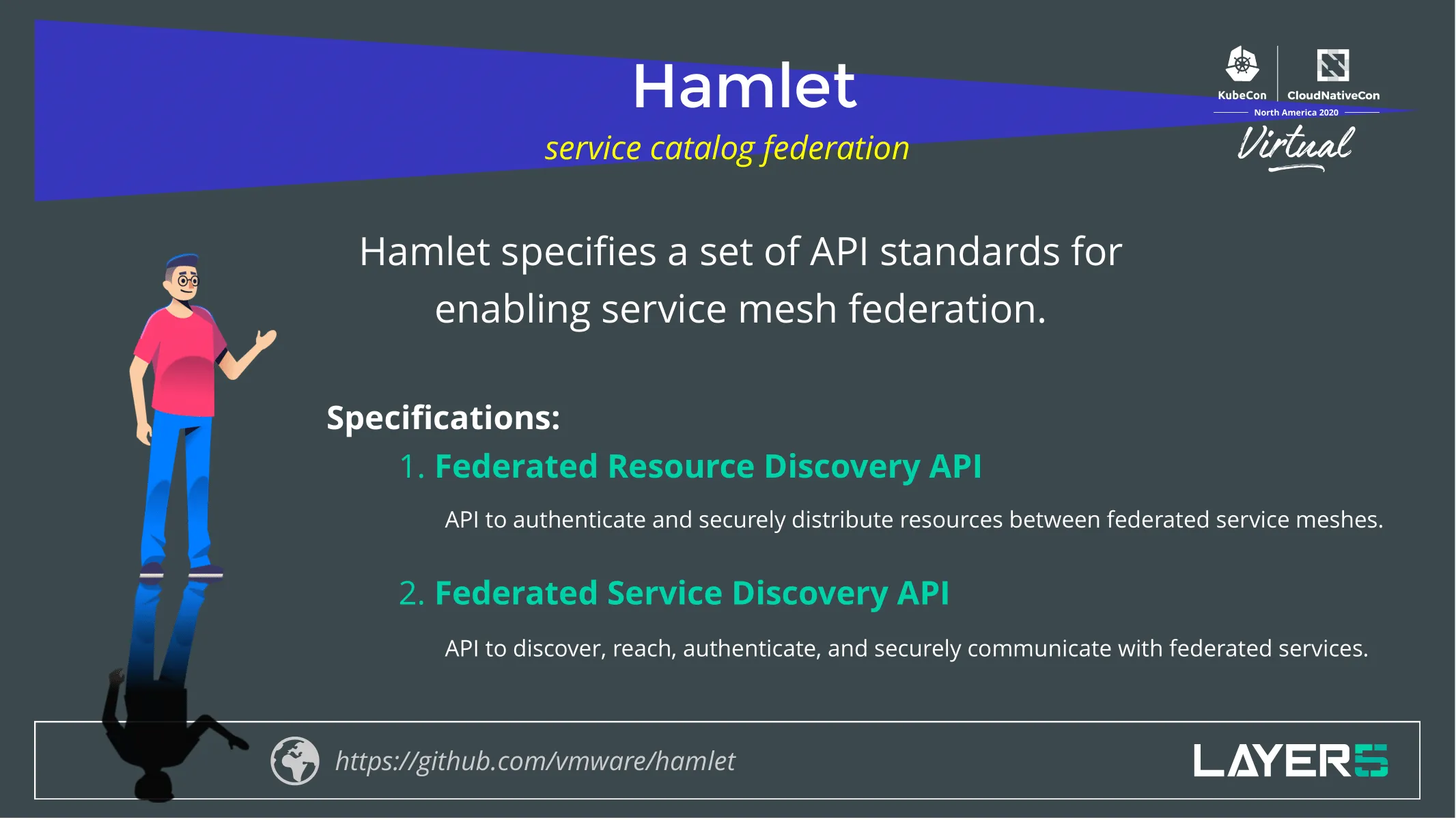
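To give a feel for why a captured result makes tests repeatable and comparable, here is a rough sketch of a result record. The field names are hypothetical and do not follow the actual SMP schema, and the sketch serializes to JSON only because that is available in the Python standard library, whereas Meshery's download is YAML.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class PerfResult:
    """Hypothetical record of one load test (not the SMP schema)."""
    mesh: str
    load_generator: str
    start_time: str
    duration_s: float
    latencies_ms: dict = field(default_factory=dict)


def build_result(mesh, load_generator, duration_s, latencies_ms, start=None):
    """Stamp a test run with its start time so runs can be tracked over time."""
    start = start or datetime.now(timezone.utc)
    return PerfResult(
        mesh=mesh,
        load_generator=load_generator,
        start_time=start.isoformat(),
        duration_s=duration_s,
        latencies_ms=dict(latencies_ms),
    )


def to_json(result):
    """Serialize the record in a stable key order so files diff cleanly."""
    return json.dumps(asdict(result), indent=2, sort_keys=True)


# Example values are made up for illustration.
result = build_result(
    mesh="open-service-mesh",
    load_generator="fortio",
    duration_s=30.0,
    latencies_ms={"p50": 1.2, "p99": 8.4},
)
print(to_json(result))
```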

Hamlet or Multi-vendor Service Mesh Interoperation

- Focuses on service mesh federation.

- Specifies a set of API standards for enabling service mesh federation.

- Hamlet takes on a client-server architecture in which the resources and services of one service mesh are discovered and registered, and information about them is exchanged between different service meshes using a common format.

- Hamlet also addresses the rules around authentication and authorization: which services get exposed and to whom, who can communicate with them, and whether or not they can do so securely.

- The specification currently consists of two APIs:

  - The Federated Resource Discovery API: API to authenticate and securely distribute resources between federated service meshes.

  - The Federated Service Discovery API: API to discover, reach, authenticate and securely communicate with federated services.

- Part of the real power is the ability to overcome what are likely to be separate administrative domains. The intention here is to connect two disparate service mesh deployments, which might be of the same type or of two different types.
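The client-server idea above can be illustrated with a small in-memory sketch. It is a toy model and not the Hamlet API: the class names, the token-based authentication, and the per-service allow list are all assumptions chosen to show the shape of the interaction, where the owning mesh registers services and a consuming mesh discovers only what it is authorized to see.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FederatedService:
    """A service the owning mesh is willing to expose (hypothetical shape)."""
    name: str
    endpoint: str
    allowed_consumers: frozenset


class FederationServer:
    """Owner-mesh side: registers services and answers discovery requests."""

    def __init__(self, trusted_tokens):
        # Maps a credential to the identity of a consumer mesh.
        self._trusted = dict(trusted_tokens)
        self._services = {}

    def register(self, service):
        self._services[service.name] = service

    def discover(self, token):
        consumer = self._trusted.get(token)
        if consumer is None:
            raise PermissionError("unknown consumer mesh")
        # Only return services exposed to this particular consumer.
        return [
            s for s in self._services.values()
            if consumer in s.allowed_consumers
        ]


class FederationClient:
    """Consumer-mesh side: pulls the catalog it is authorized to see."""

    def __init__(self, server, token):
        self._server = server
        self._token = token
        self.catalog = {}

    def sync(self):
        discovered = self._server.discover(self._token)
        self.catalog = {s.name: s.endpoint for s in discovered}
        return self.catalog


server = FederationServer({"tok-a": "mesh-a", "tok-b": "mesh-b"})
server.register(FederatedService(
    "payments", "payments.mesh-owner.example:443", frozenset({"mesh-a"})))
server.register(FederatedService(
    "catalog", "catalog.mesh-owner.example:443",
    frozenset({"mesh-a", "mesh-b"})))

print(FederationClient(server, "tok-a").sync())
print(FederationClient(server, "tok-b").sync())
```

The two meshes here could be the same type or different types; the only thing they share in this sketch is the common format of `FederatedService`.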

In addition to SMI, SMP, and Hamlet, service mesh patterns have emerged that describe how people run and operate service meshes. A service mesh working group under the CNCF's network group is helping identify those patterns, and there is already a list that you may not know about. Reach out, join it, and help us work through the 60 patterns that are defined right now. 30 of those are going into an O’Reilly book called Service Mesh Patterns.

Something that isn’t always obvious is a piece of value that people get from a service mesh, and from the specifications just mentioned: teams are decoupled when you’re running a mesh. Developers get to iterate somewhat independently of operators, and operators get to change the infrastructure and the way applications behave independently of developers. Both teams are significantly empowered. Everybody gets a piece of power when they deploy a mesh.

P.S.: If these topics excite you, come and say "Hi" on our Slack channel and one of us will reach out to you!