API Gateways interplay with service meshes

Learn more about service mesh fundamentals in The Enterprise Path to Service Mesh Architectures (2nd Edition), a free book and excellent resource that addresses how to evaluate your organization’s readiness, covers factors to consider when building new applications and converting existing ones to take best advantage of a service mesh, and offers insight into the deployment architectures used to get you there.

API gateways come in a few forms:

- Traditional (e.g., Kong)

- Cloud-hosted (e.g., Azure Load Balancer)

- L7 proxies used as API gateways, and microservices API gateways (e.g., Traefik, NGINX, HAProxy, or Envoy)

L7 proxies used as API gateways are generally represented by a collection of microservices-oriented, open source projects that wrap existing L7 proxies with the additional features an API gateway needs.

NGINX

As a stable, efficient, ubiquitous L7 proxy, NGINX is commonly found at the core of API gateways. It may be used on its own or wrapped with additional features to facilitate container orchestrator native integration or additional self-service functionality for developers. Examples of this include:

- APIUmbrella

- Kong

- OpenResty

Envoy

The Envoy project has also been used as the foundation for API gateways:

- Ambassador: Based on Envoy, Ambassador is an API gateway for microservices functioning stand-alone or as a Kubernetes Ingress Controller.

- Contour: Based on Envoy and deployed as a Kubernetes Ingress Controller. Hosted in the CNCF.

- Enroute: Envoy Route Controller. An API gateway built for Kubernetes ingress controller and standalone deployments.

Another difference between traditional API gateways and microservices API gateways is which team uses the gateway: operators or developers. Operators tend to meter API calls per consumer and disallow calls once a consumer exceeds its quota. Developers, on the other hand, tend to track L7 latency, throughput, and resilience, limiting API calls when the service is not responding.
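The two styles of control can be contrasted in a minimal Python sketch. It is not taken from any particular gateway: the class names `QuotaMeter` and `CircuitBreaker`, their parameters, and the injectable `clock` are all hypothetical, chosen only to illustrate per-consumer metering versus limiting calls to an unresponsive service.

```python
import time


class QuotaMeter:
    """Operator-style control: count calls per consumer, reject over-quota calls."""

    def __init__(self, quota):
        self.quota = quota
        self.calls = {}  # consumer id -> number of calls admitted so far

    def allow(self, consumer):
        used = self.calls.get(consumer, 0)
        if used >= self.quota:
            return False  # consumer has exhausted its quota
        self.calls[consumer] = used + 1
        return True


class CircuitBreaker:
    """Developer-style control: stop calling a backend that keeps failing."""

    def __init__(self, max_failures, reset_after, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # seconds to wait before retrying
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow(self):
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.reset_after:
            # Wait period is over: admit traffic again, but keep the failure
            # count one short of the limit so a single failure re-opens.
            self.opened_at = None
            self.failures = self.max_failures - 1
            return True
        return False

    def record(self, success):
        if success:
            self.failures = 0
            return
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()
```

The meter's decision depends on who is calling, while the breaker's decision depends on how the backend is behaving, which is roughly the operator/developer split described above.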

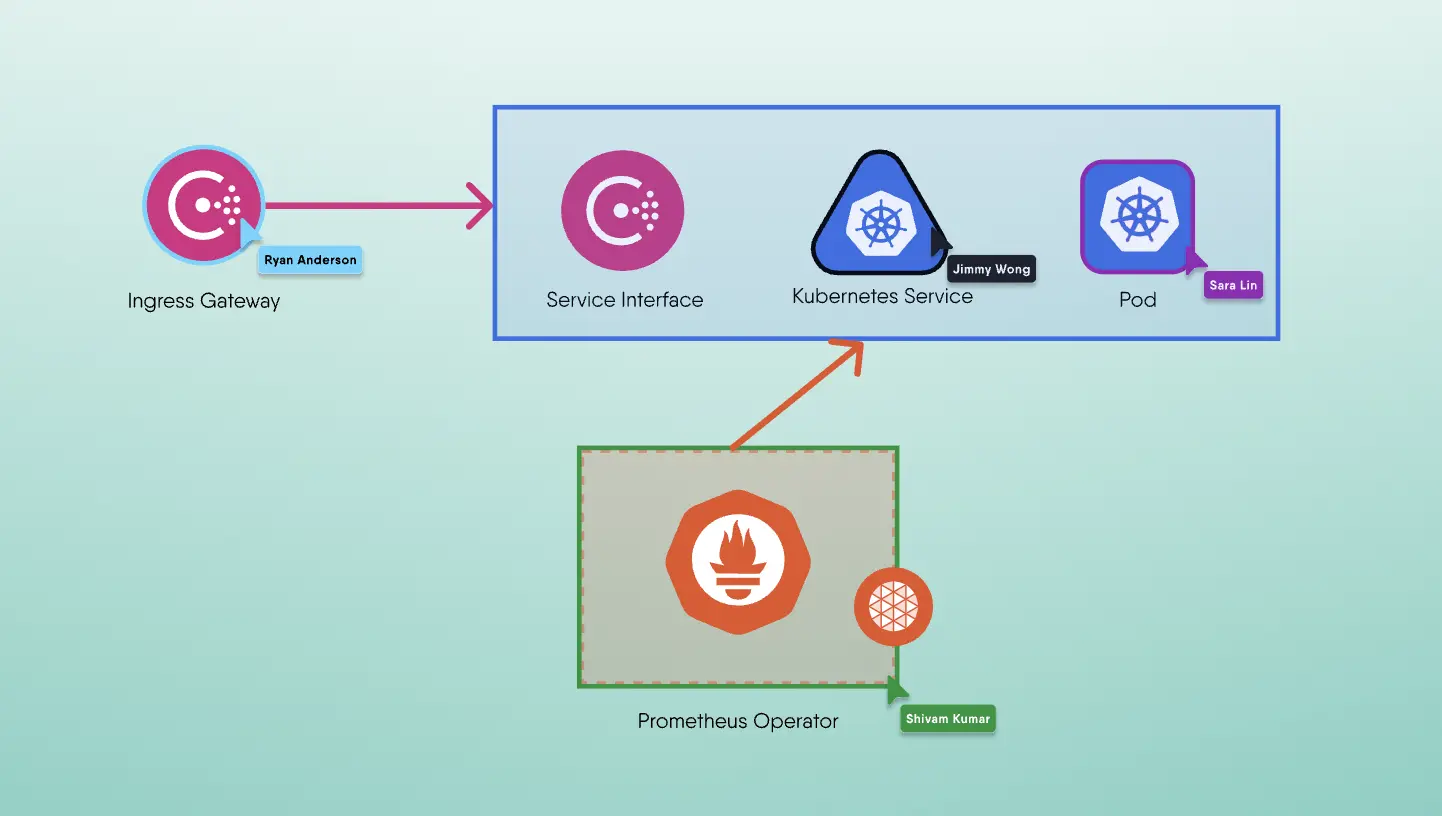

One of the most important distinctions to make when comparing API gateways with service meshes is that API gateways are designed to accept traffic from outside your organization or network and distribute it internally. API gateways expose your services as managed APIs and focus on transiting north/south traffic. They are not necessarily as well suited to traffic management within the service mesh, because they require traffic to travel through a central proxy, which adds a network hop. Service meshes are primarily designed to handle east/west traffic internal to the service mesh.

North-south (N-S) traffic refers to traffic between clients outside the Kubernetes cluster and services inside the cluster, while east-west (E-W) traffic refers to traffic between services inside the Kubernetes cluster.
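As a rough illustration of that definition, the sketch below labels a flow by checking whether each endpoint falls inside the cluster's address space. The `10.0.0.0/8` range and the function names are assumptions made for the example; a real cluster's pod and service CIDRs will differ.

```python
import ipaddress

# Hypothetical cluster address space; substitute your own pod/service CIDRs.
CLUSTER_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]


def in_cluster(addr):
    """Return True if the address belongs to one of the cluster networks."""
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in CLUSTER_NETWORKS)


def classify(src, dst):
    """Label a flow as east-west, north-south, or external."""
    src_in, dst_in = in_cluster(src), in_cluster(dst)
    if src_in and dst_in:
        return "east-west"    # service to service, inside the cluster
    if src_in or dst_in:
        return "north-south"  # crosses the cluster boundary
    return "external"         # neither endpoint is in the cluster
```

For example, `classify("203.0.113.7", "10.1.2.3")` is a client reaching into the cluster and is labeled north-south, while `classify("10.1.2.3", "10.4.5.6")` is labeled east-west.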

API gateways and service meshes are frequently deployed in combination due to their complementary nature. Service meshes are on their way to providing much, if not all, of the functionality that API gateways provide.

API Management

API gateways work with other API management ecosystem components like API marketplaces and API publishing portals, both of which are surfacing in service mesh offerings. API management solutions provide analytics, business data, adjunct provider services like single sign-on, and API version control. Many API management vendors have consolidated their API management systems around a single architectural point, with API gateways designed to be deployed at the edge.

An API gateway can call downstream services via the service mesh, offloading application network functions to it. Some API management capabilities that are oriented toward developer engagement can overlap with service mesh management planes in the following ways:

- Developer portals for API documentation and discovery, API testing, and exercising code.

- API analytics for tracking KPIs, generating reports on usage and adoption trending.

- API lifecycle management to secure APIs (allocate keys) and promote or demote APIs.

- Monetization to track payment plans and enforce quotas.
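To make the lifecycle item more concrete, here is a small Python sketch of allocating keys and promoting or demoting an API. Everything in it is hypothetical: the `ApiCatalog` class, the stage names, and the rule that keys are only issued for published APIs are assumptions for illustration, not the behavior of any specific API management product.

```python
import secrets

# Hypothetical lifecycle stages, ordered from earliest to latest.
STAGES = ["draft", "published", "deprecated", "retired"]


class ApiCatalog:
    """Tracks each API's lifecycle stage and the keys issued against it."""

    def __init__(self):
        self.stage = {}  # api name -> current stage
        self.keys = {}   # api key -> (consumer, api name)

    def register(self, api):
        self.stage[api] = STAGES[0]

    def _move(self, api, step):
        # Clamp so an API cannot move before the first or past the last stage.
        index = STAGES.index(self.stage[api]) + step
        index = max(0, min(index, len(STAGES) - 1))
        self.stage[api] = STAGES[index]
        return self.stage[api]

    def promote(self, api):
        return self._move(api, +1)

    def demote(self, api):
        return self._move(api, -1)

    def allocate_key(self, consumer, api):
        # Assumed policy: only published APIs hand out keys.
        if self.stage[api] != "published":
            raise ValueError(f"{api} is not published")
        key = secrets.token_urlsafe(32)
        self.keys[key] = (consumer, api)
        return key
```

A caller would `register` an API, `promote` it to published, and then `allocate_key` for each consumer; demoting the API back to draft stops new keys from being issued under this assumed policy.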