Load Generation

Load generation is a crucial testing technique employed to scrutinize a system's behavior under specific loads. The primary objectives include identifying bottlenecks, measuring performance, and gaining insights into how the system operates under extreme conditions.

Load tests empower developers to:

- Understand the scalability of the application

- Identify and rectify bottlenecks

- Ensure reliability and robustness of the application

- Predict system behavior under varying conditions

Meshery Load Generator

Let's delve into Meshery, a multi-Cloud Native management plane founded by Layer5—a community dedicated to open-source initiatives in network management and observability. Meshery aids developers in assessing the performance of their Cloud Native applications.

Meshery boasts a comprehensive set of features, including:

- Performance testing

- Cloud Native Lifecycle Management

- Configuration management

Supporting various load generators like HTTP/HTTPS/HTTP2, Nighthawk and its built-in load generator, Meshery facilitates the creation of synthetic HTTP load and enables benchmarking the performance of Cloud Native applications. Developers can choose their preferred load generator for tests and benefit from the capability to run tests, providing a more comprehensive performance analysis.

With Meshery, developers can:

- Manage the lifecycle of various Cloud Native applications.

- Conduct performance tests and compare results between across different Cloud Native environments.

- Apply custom or pre-defined configurations to enhance Cloud Native functionality.

Load Balancing

Load balancing is a critical technique that enhances the responsiveness, reliability, and scalability of applications by efficiently distributing incoming network traffic across multiple servers. In this guide, we'll explore various load balancing techniques and algorithms, shedding light on their roles, benefits, and challenges in the context of Kubernetes networking.

Introduction: The Crucial Role of Load Balancing

Load balancing plays a pivotal role in optimizing the performance of internet applications by efficiently managing network traffic. Imagine a grocery store with multiple checkout lines—one open and the others closed. Load balancing is akin to opening all checkout lines,thereby reducing wait times, and improving overall efficiency.

Layer5

How Load Balancing Works:

Load balancing involves three main components:

Load Balancer: Positioned between users and application servers, it monitors incoming traffic and redirects it to the most suitable server. It can be hardware-based or software-based, with the latter running on existing infrastructure.

Application Servers: These servers host applications, receiving traffic from the load balancer and serving requested content to users.

Users: End-users access applications through the internet or intranet.

When a user initiates a request, the load balancer evaluates factors such as server capacity, usage rate, and response time to determine the optimal server. This process enhances efficiency and reduces user wait times.

Benefits of Load Balancers: Performance, Reliability, and Scalability

Improved Performance: By distributing workloads evenly, response time and system latency are reduced.

Increased Reliability: Fault tolerance is enhanced by detecting and removing failed or unhealthy servers,redirecting traffic to functional servers.

Enhanced Scalability: The system's capacity can be horizontally scaled by adding more servers without impacting existing ones.

Reduced Costs: Efficient resource utilization avoids underutilization or overprovisioning of servers.

Load Balancing with Meshery

Meshery serves as a platform for managing various Cloud Native applications . While it doesn't directly perform load balancing directly, Meshery allows users to configure and observe load balancing within their chosen Cloud Native Adaptors. Through a unified dashboard, users can customize load balancing settings, monitor relevant metrics, and leverage observability tools to gain insights into the performance and health of load balancing features. Meshery's focus is on providing a consistent and user-friendly interface for Cloud Native Lifecycle Management, including load balancing configuration.

Adapters

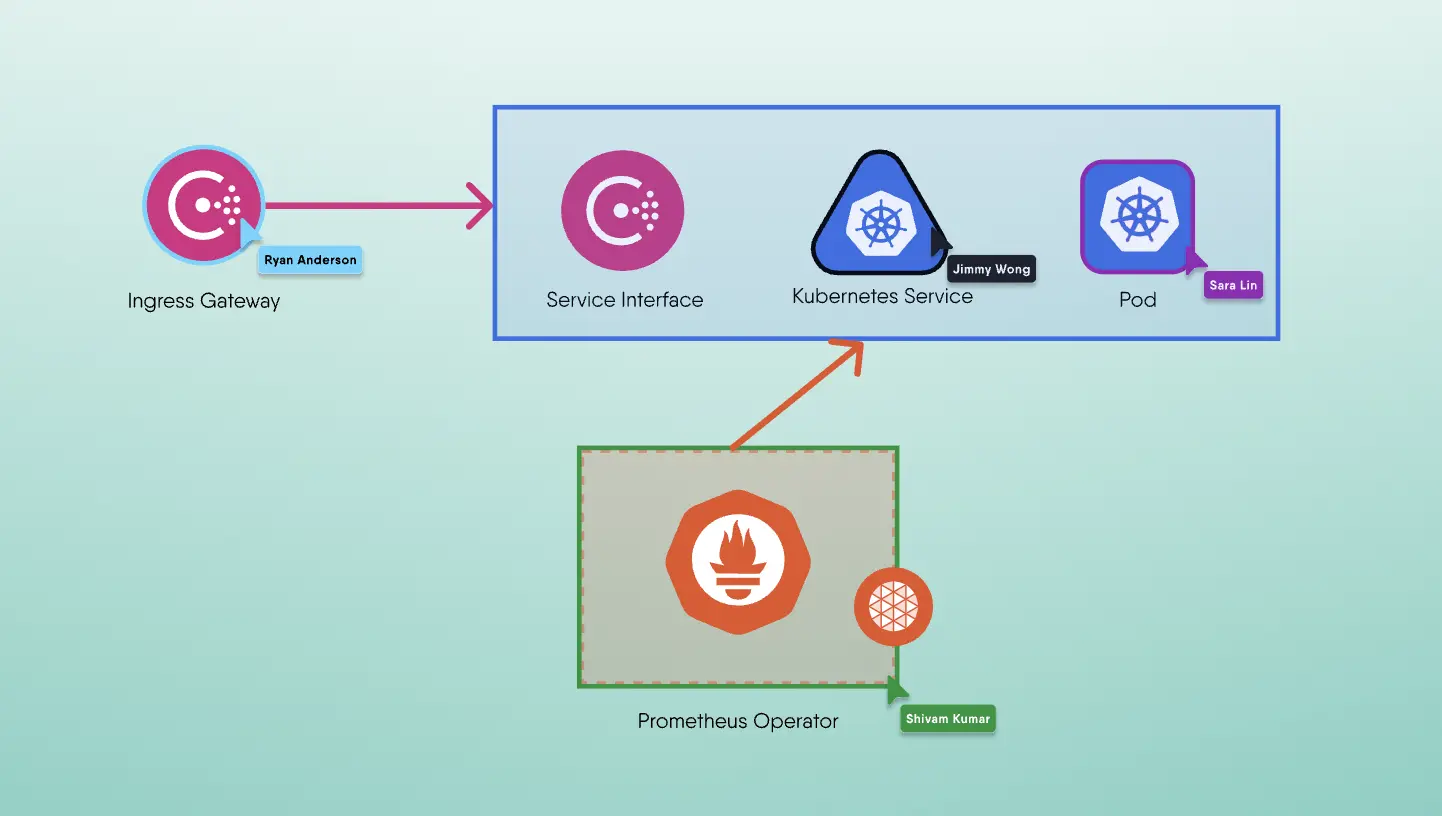

Types of Load Balancers in Kubernetes: Service, Ingress, and External

Service Load Balancing: This basic Kubernetes tactic routes all requests to a service and directs them to matching pods. It's suitable for cluster-internal traffic but lacks advanced features like SSL termination.

Ingress Load Balancing: Handling external traffic, it routes requests based on rules defined in an ingress resource. It offers more flexibility and functionality, including SSL termination and authentication.

External Load Balancing: Involving external load balancers from cloud providers (AWS ELB, Google Cloud Load Balancer), it provides layer-4 or layer-7 load balancing and high availability. However, it adds complexity and additional costs.

Challenges of Load Balancing

Despite its advantages, load balancing comes with challenges such as the need for careful configuration to address specific application requirements and potential complexities in managing dynamic workloads.

Load Balance Algorithms: Static and Dynamic

Static Load Balancing Algorithms:

- Round Robin: Distributes requests sequentially across servers.

- Hash: Distributes requests based on a key, such as client IP address or request URL.

- Random: Distributes requests randomly.

Dynamic Load Balancing Algorithms:

Least Connections: Directs requests to the server with the fewest active connections.

Least Response Time: Considers active connections and average response time to determine the optimal server.

Least Bandwidth: Routes requests to the server with the least traffic.

Choosing the Right Load Balancer: Tips and Considerations

Consider factors like application needs, performance requirements, and scalability when selecting a load balancer—whether hardware, software, or a combination.

Conclusion: Empowering Applications with Optimal Load Balancing

Load balancing is a powerful technique that significantly impacts the performance, reliability, and scalability of applications. Whether distributing workloads based on static or dynamic algorithms, choosing the right load balancing strategy is crucial for delivering an exceptional user experience and optimizing resources. As technologies evolve, staying informed about the latest load balancing practices ensures continuous improvement in application delivery.